This post is part of the Social Media Lab #ELXN43 Transparency Initiative. It’s part two of a two-part post that summarizes our ongoing research on the role of social bots and trolls in our public discourse. (Part 1 can be found here.) The post is an abridged version of Dr. Anatoliy Gruzd’s recent talk, “FakeNews” Travels Fast — How Social Bots and Trolls Are Reshaping Public Debates, given at the Dean’s Speaker Series at the Ted Rogers School of Management on Oct 7, 2019. You can find the SlideShare here and the video here.

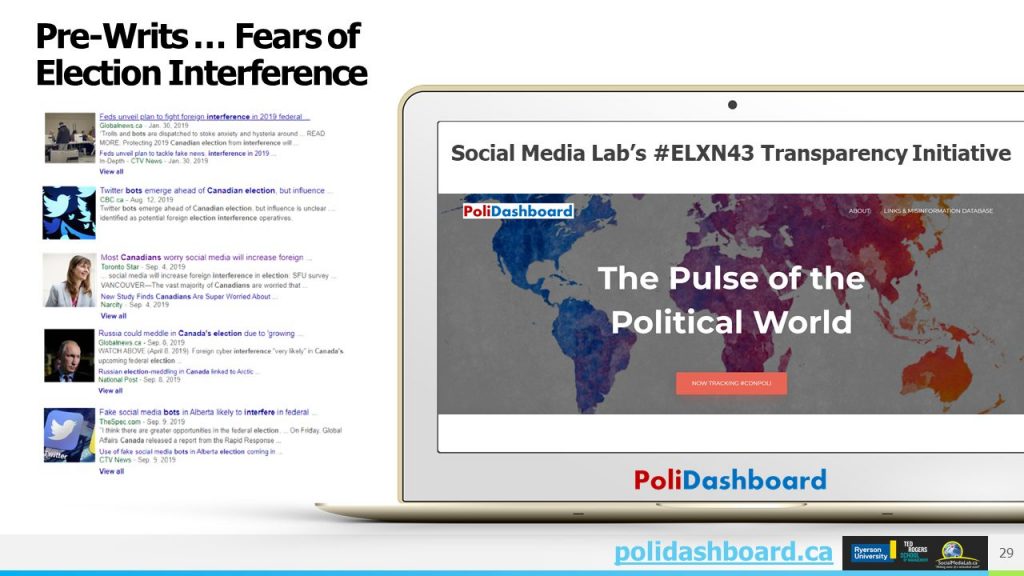

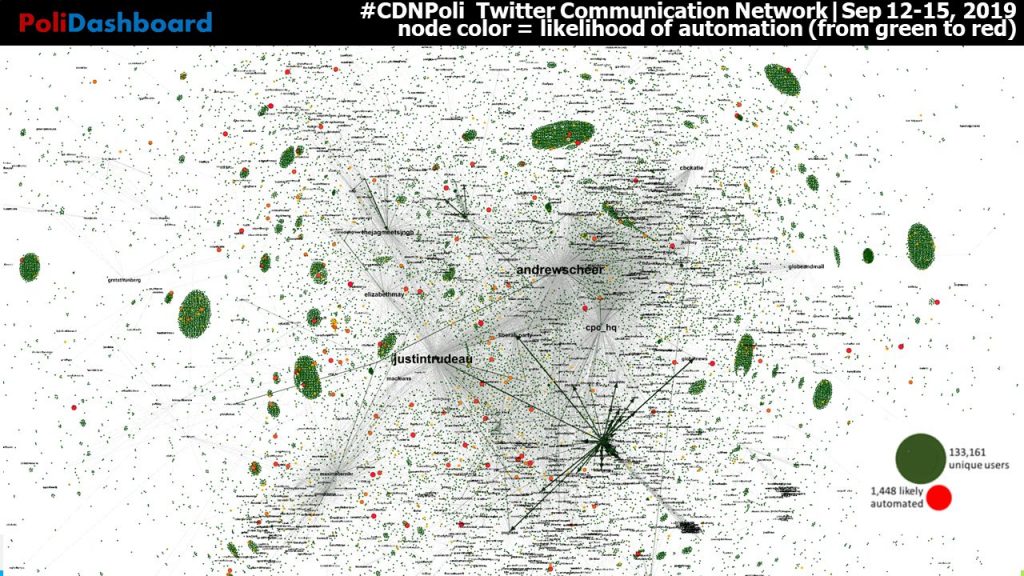

Leading up to the 2019 Canadian federal election, there was a lot of press and social media chatter about possible political interference from foreign (and domestic) actors. To help bring more transparency to who is trying to influence our vote online, we launched the Social Media Lab’s Election 43 (#ELXN43) Transparency Initiative with the help of the lab’s Co-director, Philip Mai, and our team of developers and analysts. As part of this initiative, we created PoliDashboard, a new data visualization tool designed to help voters, journalists, and campaign staffers monitor the health of political discussions in Canada. It analyzes publicly available data from Twitter and Facebook to spot misinformation and the use of automation (such as political bots).

PoliDashboard consists of three main modules:

- a #CDNPoli Twitter Module that provides near-real-time analysis of the #CDNPoli Twitter stream to flag misinformation (aka “fake news”) being shared on Twitter and the use of automation (aka political bots);

- an expert-curated Links & Misinformation Database containing URLs shared on #CDNPoli plus a compendium of blacklisted URLs known for disseminating hoaxes and disinformation (as documented by trusted sources such as journalists and other academic researchers);

- a Facebook Political Ads Module featuring information about political advertisers and the ads they are running on Facebook.

Working together, these three modules of PoliDashboard make use of publicly available social media data to add more transparency to our online political discourse.

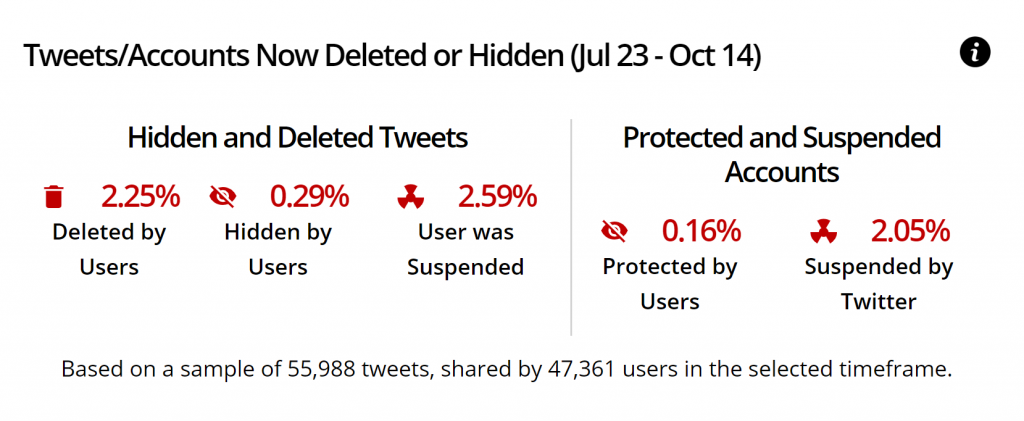

Deleted Tweets and Accounts

One metric the dashboard can track is how fast and how often Twitter is able to suspend or remove accounts and content that violate its platform manipulation policies (spam, coordinated activity, fake accounts, etc.). For example, in our random sample of 55,988 tweets mentioning #cdnpoli posted between July 23 and October 14, 2.25% of tweets had been deleted (likely by the users themselves), and about 2.59% had disappeared because the account that posted them was suspended. Keeping track of this information gives us baseline counts to follow and compare over time.
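As a rough illustration of how such baseline percentages can be tallied, here is a minimal Python sketch. It assumes each sampled tweet has already been re-checked and labelled with one of three hypothetical states (`available`, `deleted`, `suspended`). The function name and the toy numbers are ours for illustration and are not taken from PoliDashboard.

```python
from collections import Counter


def availability_rates(statuses):
    """Return the percentage of tweets in each availability state."""
    total = len(statuses)
    counts = Counter(statuses)
    return {state: round(100 * n / total, 2) for state, n in counts.items()}


# Toy sample of 1,000 re-checked tweets (made-up numbers, not the lab's data).
sample = ["available"] * 950 + ["deleted"] * 23 + ["suspended"] * 27
rates = availability_rates(sample)
print(rates)
```

Re-running the same tally on later samples would give the kind of over-time comparison described above.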

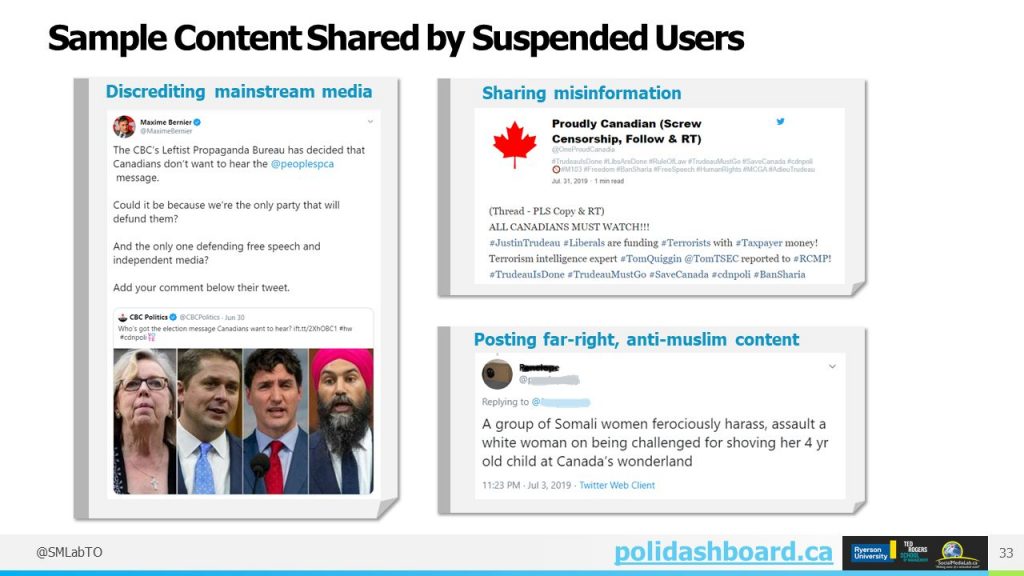

With PoliDashboard, we are also able to independently examine the types of content that suspended accounts tended to share while they were active. This helps both researchers and the public understand who may be behind anti-social campaigns during this election period and learn about their strategies.

For example, many of the now-suspended accounts frequently shared content that tried to discredit mainstream media, shared misinformation such as “Liberals are funding terrorists”, and posted far-right and anti-Muslim content.

Blacklist Sites

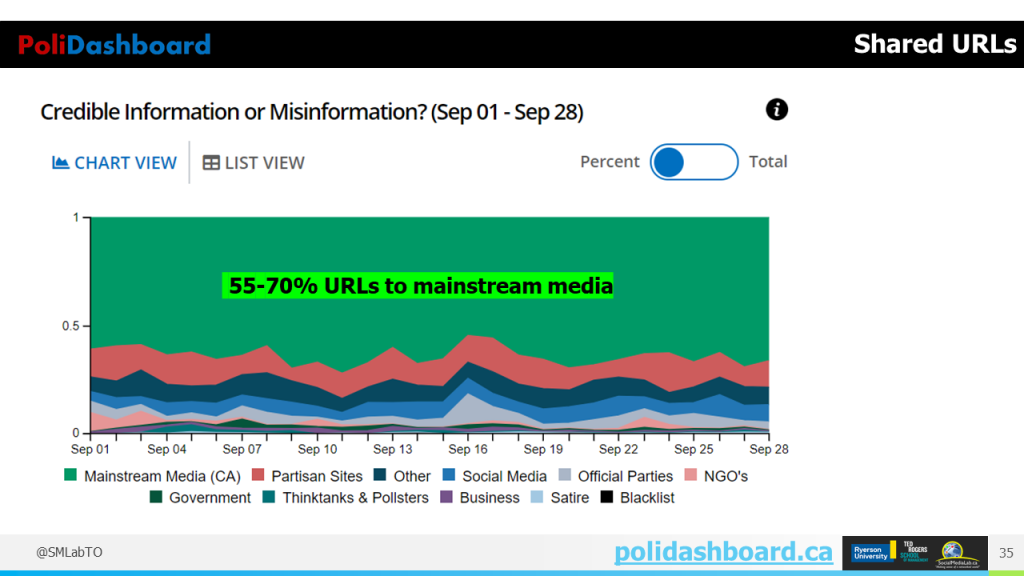

To understand what types of content and news sources Canadians are sharing on Twitter, we are classifying URLs shared in tweets into one of 11 categories, including mainstream media, government, political parties, partisan sources, and known blacklisted sites such as impostors, misinformation and malicious resources. (Click here for a detailed description of each of the 11 categories.) Thus far, our data shows that the majority of URLs being shared direct people to credible mainstream sources. For example, in September, 55 to 70% of web links shared with #cdnpoli led users to mainstream media sources.
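The idea of sorting shared links into categories can be sketched as a simple domain lookup. The snippet below is only an illustration: the `DOMAIN_CATEGORIES` table, its three entries, and the `uncategorized` fallback are hypothetical stand-ins for the expert-curated database, which covers 11 categories.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical lookup table; the real database is expert-curated.
DOMAIN_CATEGORIES = {
    "cbc.ca": "mainstream media",
    "canada.ca": "government",
    "example-hoax.test": "blacklisted",
}


def categorize(url):
    """Map a URL to a category via its host or any parent domain."""
    host = (urlparse(url).hostname or "").lower()
    parts = host.split(".")
    for i in range(len(parts)):
        candidate = ".".join(parts[i:])
        if candidate in DOMAIN_CATEGORIES:
            return DOMAIN_CATEGORIES[candidate]
    return "uncategorized"


def category_shares(urls):
    """Return the percentage of shared URLs that fall in each category."""
    counts = Counter(categorize(u) for u in urls)
    total = sum(counts.values())
    return {cat: round(100 * n / total, 1) for cat, n in counts.items()}


shared = [
    "https://www.cbc.ca/news/politics",
    "https://newsinteractives.cbc.ca/elections",
    "https://www.canada.ca/en.html",
    "http://unknown.example/post",
]
shares = category_shares(shared)
print(shares)
```

Matching on parent domains means subdomains inherit the category of the site they belong to, which is a design choice of this sketch rather than a documented behaviour of the dashboard.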

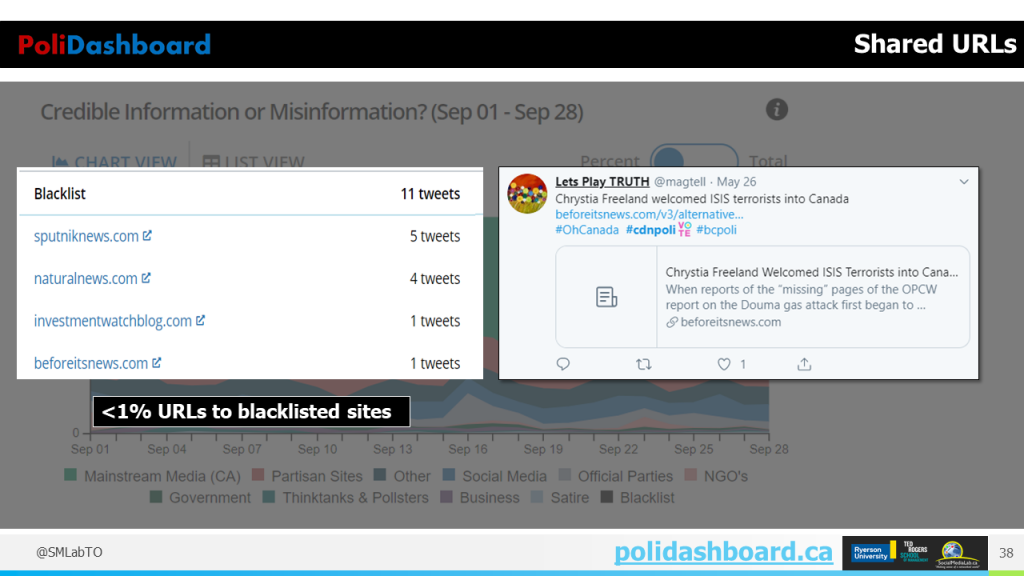

Links to partisan sites made up a smaller proportion of the information sources being shared on Twitter (ranging from 7 to 18%). And only a small fraction of these domains are hyperpartisan sites such as the Friends of Science Calgary blog (a site that offers alternative views on the cause of climate change and whose content is frequently shared by supporters on the right side of the political spectrum).

So far, we have tracked only a few tweets sharing content from known spreaders of misinformation (known “bad actors”, based on lists compiled by PolitiFact or BuzzFeed News). These sites are often disguised to look like mainstream news organizations and may publish articles on regular news and events with the intent to mislead.

Bot Detection with Botometer

Next, let’s turn to detecting social bots on Twitter, as they have been associated with past misinformation campaigns. By “social bots”, we mean software designed to act on the Internet with some level of autonomy. Not all bots are “bad”; we are interested in studying bots designed to amplify and spread misinformation, expose private information, artificially enlarge one’s audience, and influence public opinion.

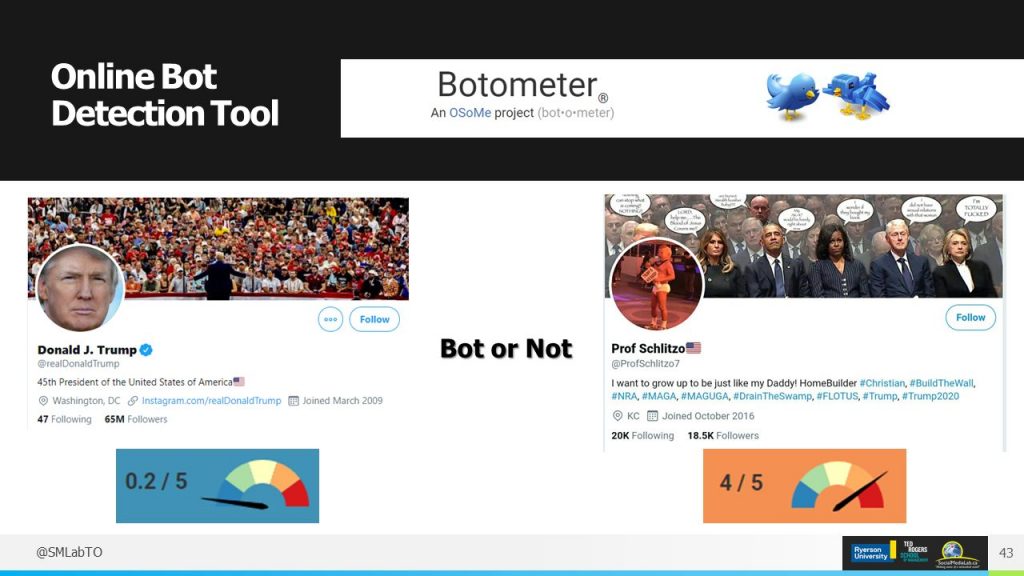

The dataset of tweets we are studying is large and still growing, so we rely on a third-party tool called Botometer to check each account automatically. Botometer scores each account on a scale from 0 to 5 to indicate how likely the account is to be automated. These scores are assigned based on a number of factors, such as when the account was created, how often it posts, and which accounts it is connected to on Twitter.

Using Botometer within PoliDashboard, we can display the probability that an account is completely automated (i.e., engaged in bot-like behavior).
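To make the step from per-account scores to a “likely bot” label concrete, here is a minimal sketch. It does not call Botometer. It assumes the scores have already been retrieved and top out at 5, and the cutoff of 4.0, the function names, and the account handles are all hypothetical choices for illustration.

```python
def likely_bots(scores, threshold=4.0):
    """Return accounts whose score meets the (assumed) cutoff."""
    return sorted(name for name, score in scores.items() if score >= threshold)


def bot_share(scores, threshold=4.0):
    """Return the percentage of accounts flagged as likely bots."""
    if not scores:
        return 0.0
    return round(100 * len(likely_bots(scores, threshold)) / len(scores), 1)


# Made-up scores for four made-up accounts.
toy_scores = {"@acct_a": 0.4, "@acct_b": 4.6, "@acct_c": 2.1, "@acct_d": 4.0}
flagged = likely_bots(toy_scores)
share = bot_share(toy_scores)
print(flagged, share)
```

Where the cutoff sits is a judgment call: a lower threshold flags more accounts but also mislabels more humans.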

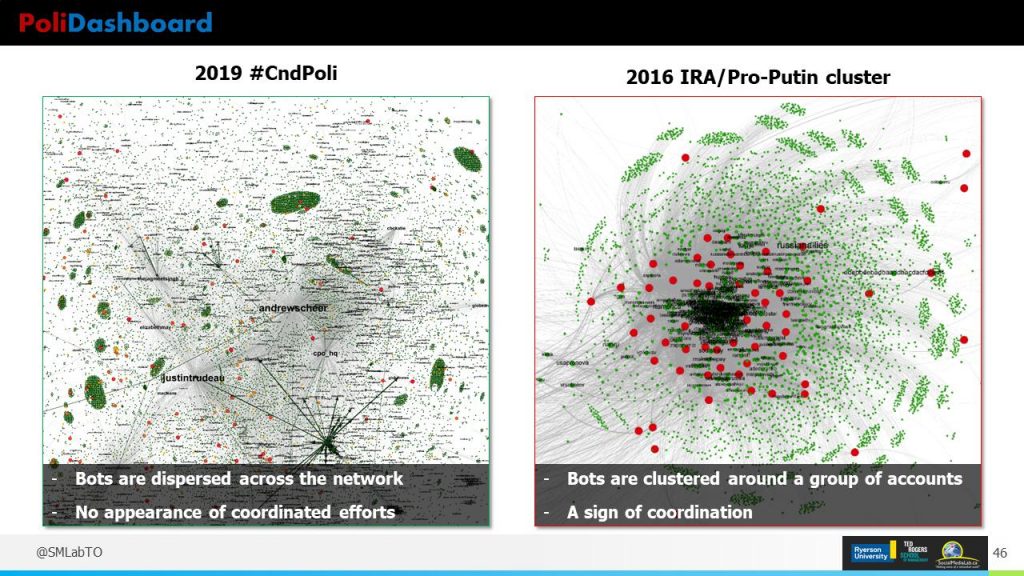

Using this information, we can then identify and study the behavior of bot-like accounts in a communication network, similar to what we did in our analysis of the 2016 IRA Misinformation Campaign. The goal is to see if and how the bots and other accounts might be trying to influence political discussions in this election cycle, and whether there is any evidence of clustering of accounts in the network, as that is one of the possible signs of a coordinated attack on Twitter.

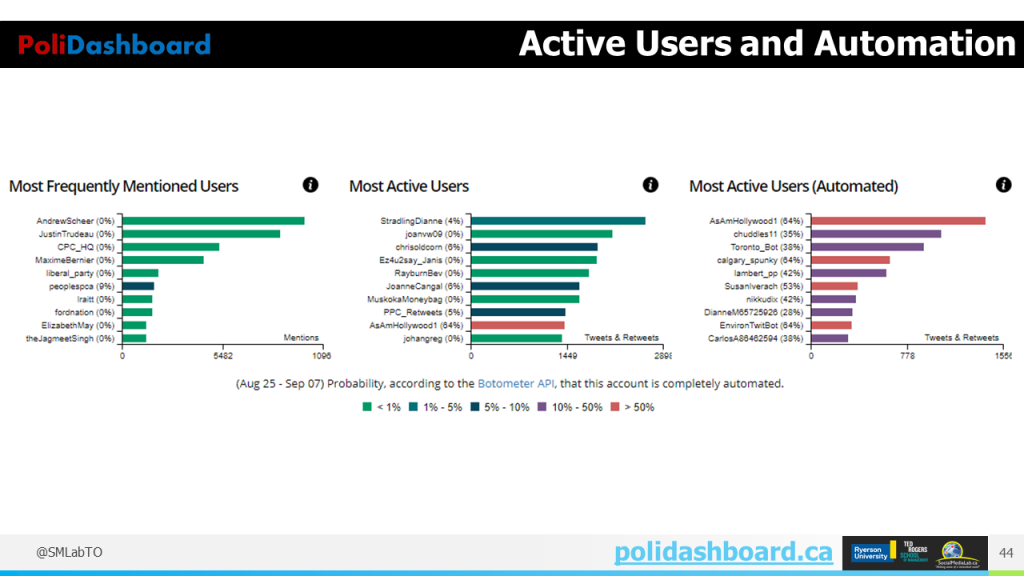

Our preliminary analysis shows that only about 1% of accounts that recently used the #cdnpoli hashtag were classified as likely to be bots; they are located throughout the network and are not concentrated around a particular group of accounts. So, we may conclude that, while bots are present in Canadian political discussions on Twitter, they are not having a strong impact because they are dispersed.
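One simple way to put a number on “dispersed versus concentrated” is to ask how many of each bot’s neighbours are themselves bots. The sketch below is an assumed, simplified check and not the method used in our analysis; the function name, the edge lists, and the account names are hypothetical.

```python
from collections import defaultdict


def bot_neighbor_share(edges, bots):
    """Average share of each bot's neighbours that are also bots."""
    bots = set(bots)
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    shares = [
        len(neighbors[bot] & bots) / len(neighbors[bot])
        for bot in bots
        if neighbors[bot]  # skip bots with no interactions
    ]
    return sum(shares) / len(shares) if shares else 0.0


# Dispersed: each bot only interacts with ordinary users.
dispersed = [("bot1", "u1"), ("bot1", "u2"), ("bot2", "u3"), ("bot2", "u4")]
# Clustered: the bots interact with each other and share a target.
clustered = [("bot1", "bot2"), ("bot1", "u1"), ("bot2", "u1")]

low = bot_neighbor_share(dispersed, {"bot1", "bot2"})
high = bot_neighbor_share(clustered, {"bot1", "bot2"})
print(low, high)
```

A value well above the overall proportion of bots in the network would hint at clustering, while a value close to it is consistent with bots being spread out.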

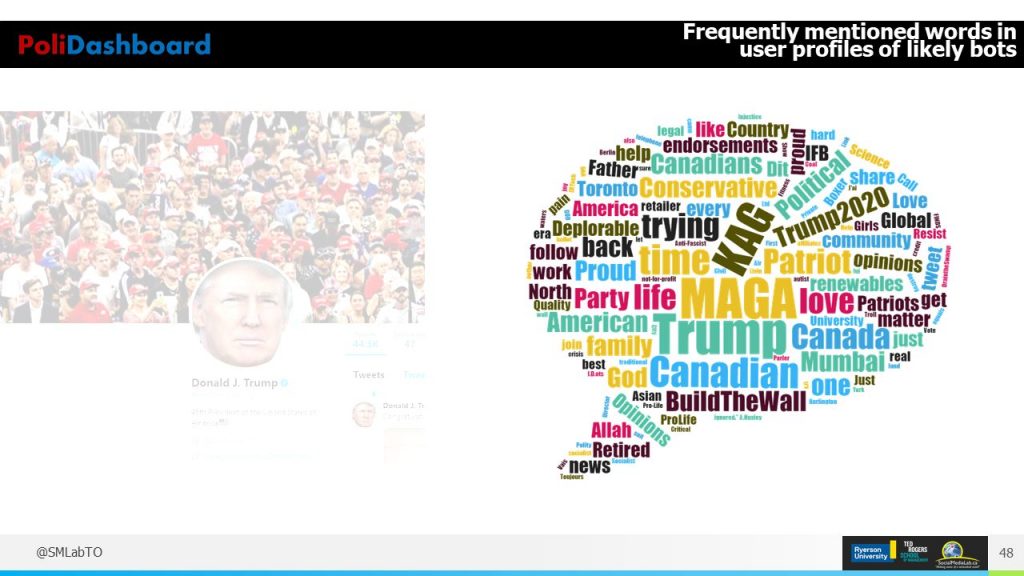

While we didn’t find evidence of coordination among bots in this network, we did notice that many active bot-like accounts also appear to be Trump supporters. Their user profile descriptions contain words like #MAGA (Make America Great Again), #KAG (Keep America Great), BuildTheWall, or Trump. What makes this interesting is the fact that the #CDNPoli hashtag is supposed to be about Canadian politics, and yet we see some pro-Trump accounts involved in the conversation. This makes you wonder why Trump supporters are so interested in Canadian politics.

Troll Detection with Communalytic

Our analysis shows that we should also pay attention to another type of political actor on Twitter: the “trolls”. The defining characteristic of a “troll” is that they post insulting and bullying messages to provoke others. While bot accounts are fully (or partially) automated, Internet trolls are usually real people who may control one or more accounts. While bots may engage others by reposting their messages or auto-replying, trolls will often engage others by ceaselessly attacking and arguing with them. Sometimes trolling is done for fun, but it may also have a more sinister agenda.

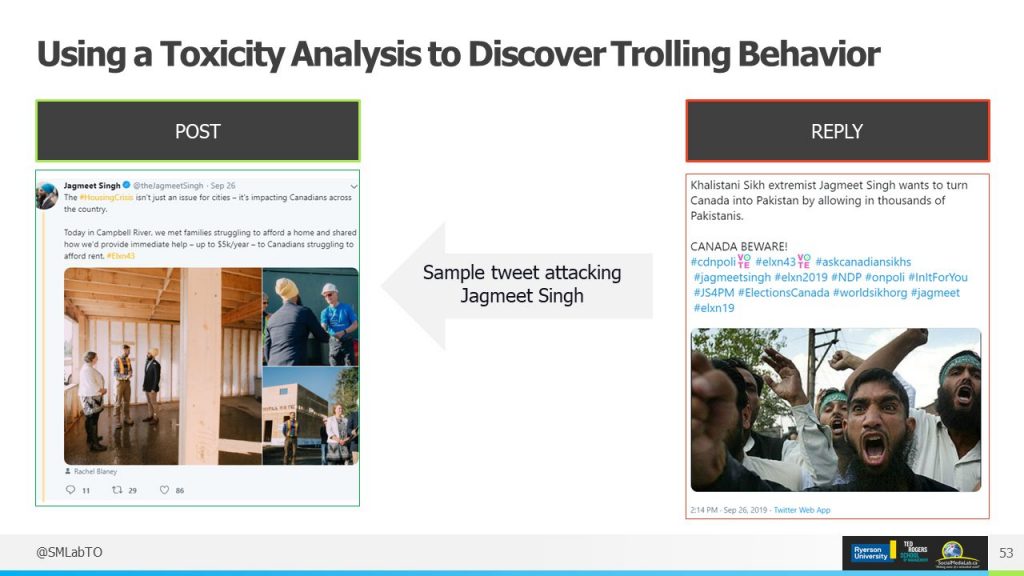

An example of troll-like behaviour is the following post replying to a Jagmeet Singh tweet, calling him a religious “extremist”.

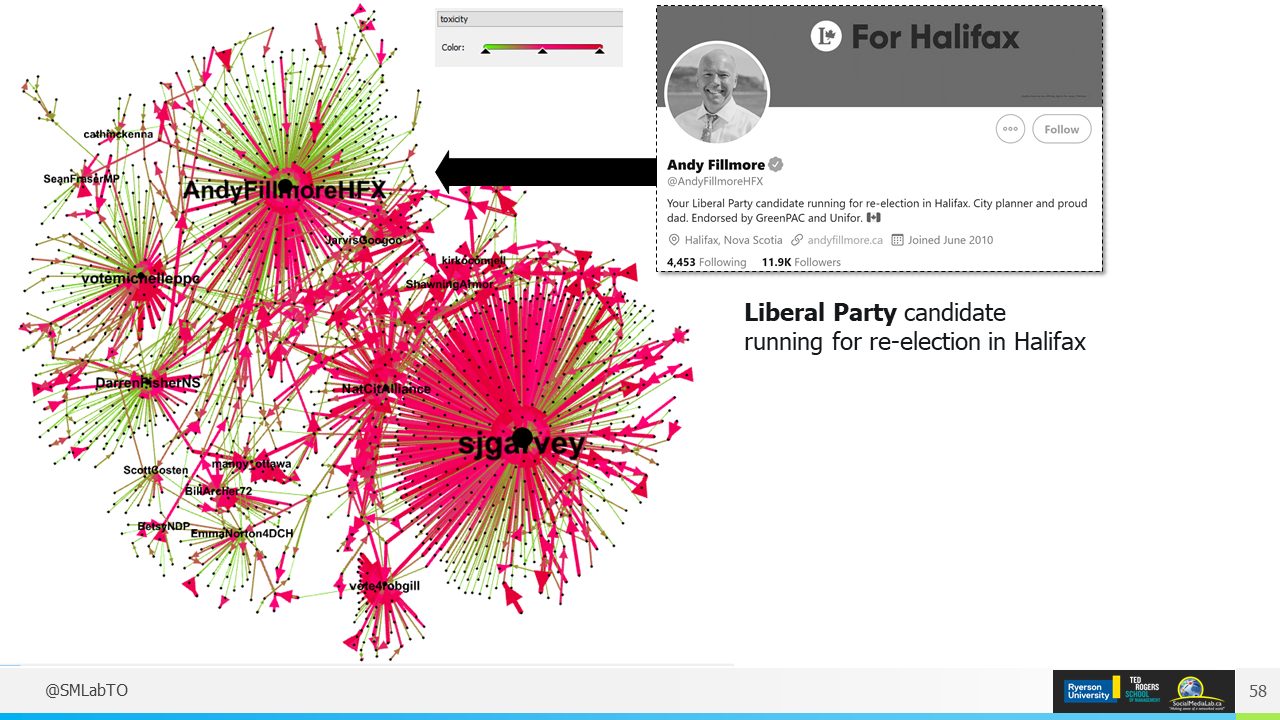

To detect and measure the prevalence of troll-like behaviour on Twitter, we rely on Communalytic, a tool that we have developed in-house at the Social Media Lab. Communalytic is a research tool for studying online communities and online discourse. It can collect public data from various social media platforms, including Reddit, Twitter, and Facebook/Instagram (via CrowdTangle), and can analyze the data using advanced text and social network analysis techniques. One of the unique features of Communalytic is that it can analyze the content of messages to pinpoint toxic and anti-social interactions in online discussions using a machine learning algorithm from Google. By using automated content analysis to enrich communication network data, we are able to detect toxic types of attacks and highlight them in a network visualization for further analysis.

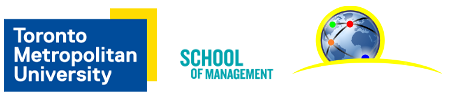

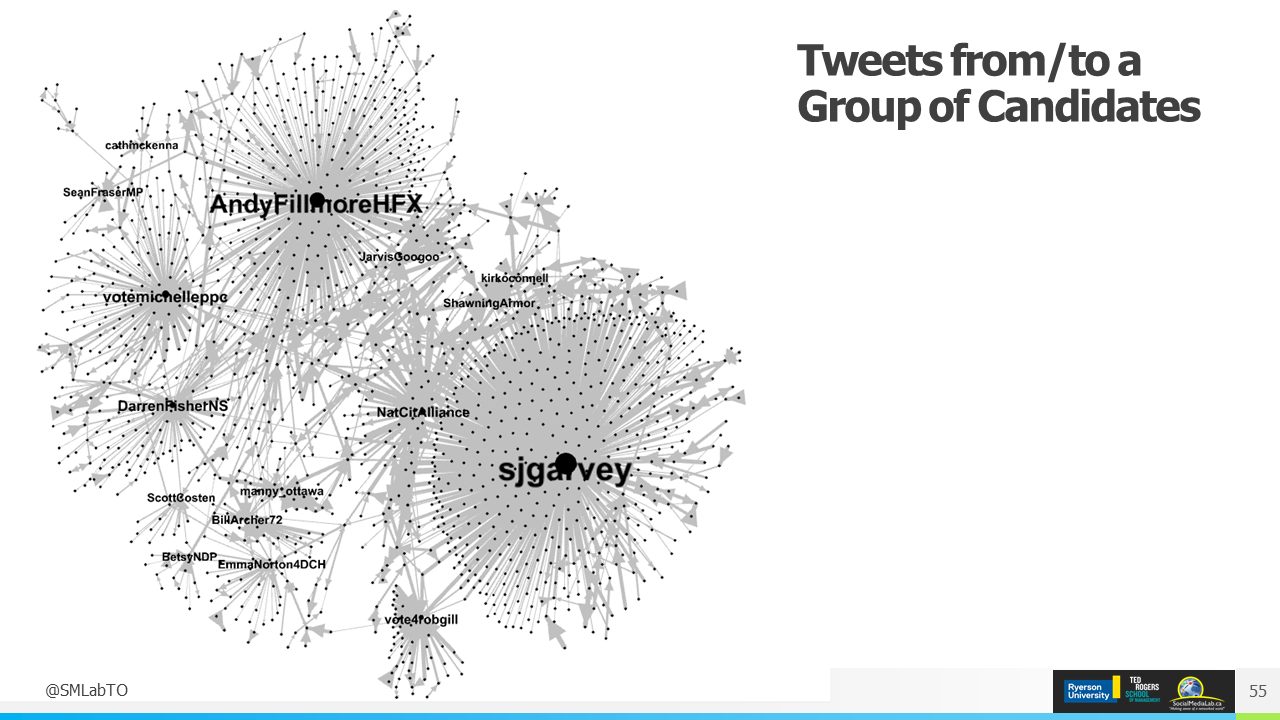

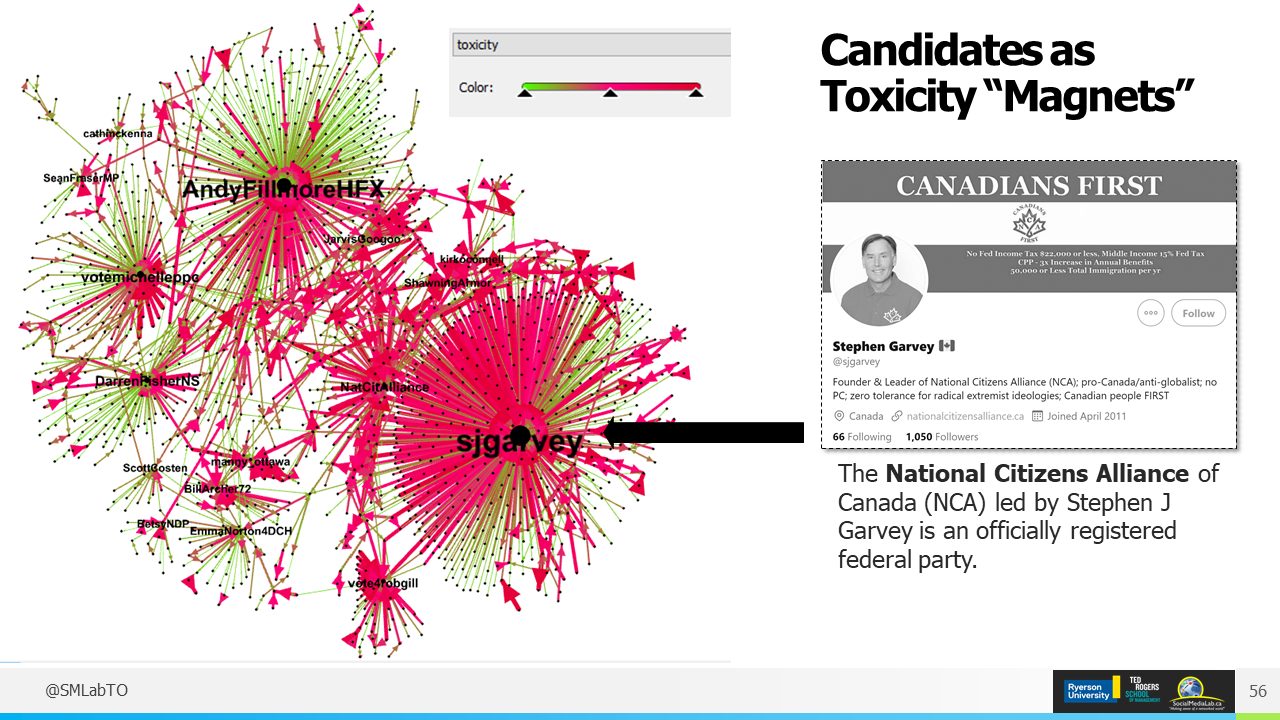

Consider the following sample network that represents interactions on Twitter between a set of candidates running in this election and other Twitter users. By automatically analyzing the content of these exchanges and adding that information to the edges (links) in a network, we can quickly and easily identify toxic interactions and users in any online conversation.

This network visualization shows how one of the candidates from the National Citizens Alliance party (a small federal party) is receiving a lot of toxic messages. We also see from this visualization that toxic messages and trolls don’t discriminate based on party affiliation. For example, a cluster around a Nova Scotia Liberal candidate, Andy Fillmore (see the cluster in the top left corner of the network), shows about an even number of civil and toxic exchanges.
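The idea of attaching content analysis results to network edges can be sketched in a few lines. This is a hedged illustration and not Communalytic’s code: it assumes each reply already carries a toxicity score between 0 and 1 from some classifier, and the 0.7 cutoff, field names, and account names are hypothetical.

```python
from collections import Counter

TOXICITY_THRESHOLD = 0.7  # assumed cutoff, chosen only for illustration


def label_edges(replies, threshold=TOXICITY_THRESHOLD):
    """Tag each reply edge as 'toxic' or 'civil' based on its score."""
    return [
        {**r, "label": "toxic" if r["toxicity"] >= threshold else "civil"}
        for r in replies
    ]


def toxic_share_by_target(labeled):
    """Return, per recipient, the fraction of incoming replies that are toxic."""
    totals, toxic = Counter(), Counter()
    for edge in labeled:
        totals[edge["target"]] += 1
        if edge["label"] == "toxic":
            toxic[edge["target"]] += 1
    return {target: toxic[target] / totals[target] for target in totals}


# Made-up replies with made-up scores.
replies = [
    {"source": "user1", "target": "candidate_a", "toxicity": 0.91},
    {"source": "user2", "target": "candidate_a", "toxicity": 0.12},
    {"source": "user3", "target": "candidate_a", "toxicity": 0.75},
    {"source": "user4", "target": "candidate_a", "toxicity": 0.30},
    {"source": "user1", "target": "candidate_b", "toxicity": 0.05},
]
labeled = label_edges(replies)
by_target = toxic_share_by_target(labeled)
print(by_target)
```

In a visualization, the `label` field could drive edge colour, so that a cluster with roughly equal numbers of civil and toxic exchanges stands out at a glance.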

Such anti-social behavior towards politicians and other Canadians is likely to discourage them from contributing to the digital public sphere in a meaningful way. This is problematic as it goes against the early promise of social media to give a voice to the voiceless and turn the world into a connected village.

Recap

In this blog post and the previous post in the series, we have shown what a known misinformation campaign looks like, how we can detect bots, and how to identify trolls. If you would like to see the full presentation, including a short discussion with journalist and TV anchor Tom Clark of where we can go from here, you can watch the following video.

By: Anatoliy Gruzd and Philip Mai, edited by Donald Patterson